- Google Threat Intelligence Group (GTIG) published an AI Threat Tracker identifying five malware families with novel AI capabilities: FRUITSHELL, PROMPTFLUX, PROMPTLOCK, PROMPTSTEAL, and QUIETVAULT. Google Cloud

- PROMPTSTEAL marks Google’s “first observation of malware querying an LLM deployed in live operations,” used by Russia‑linked APT28 against Ukrainian targets. Google Cloud

- In coverage of the report, Ars Technica judged the five AI‑built samples “far below par” versus professional malware and “easily detected,” suggesting vibe‑coding still lags traditional development. Ground News

- Google’s Billy Leonard struck a measured tone: “This isn’t ‘the sky is falling, end of the world.’” Axios

What Google actually found

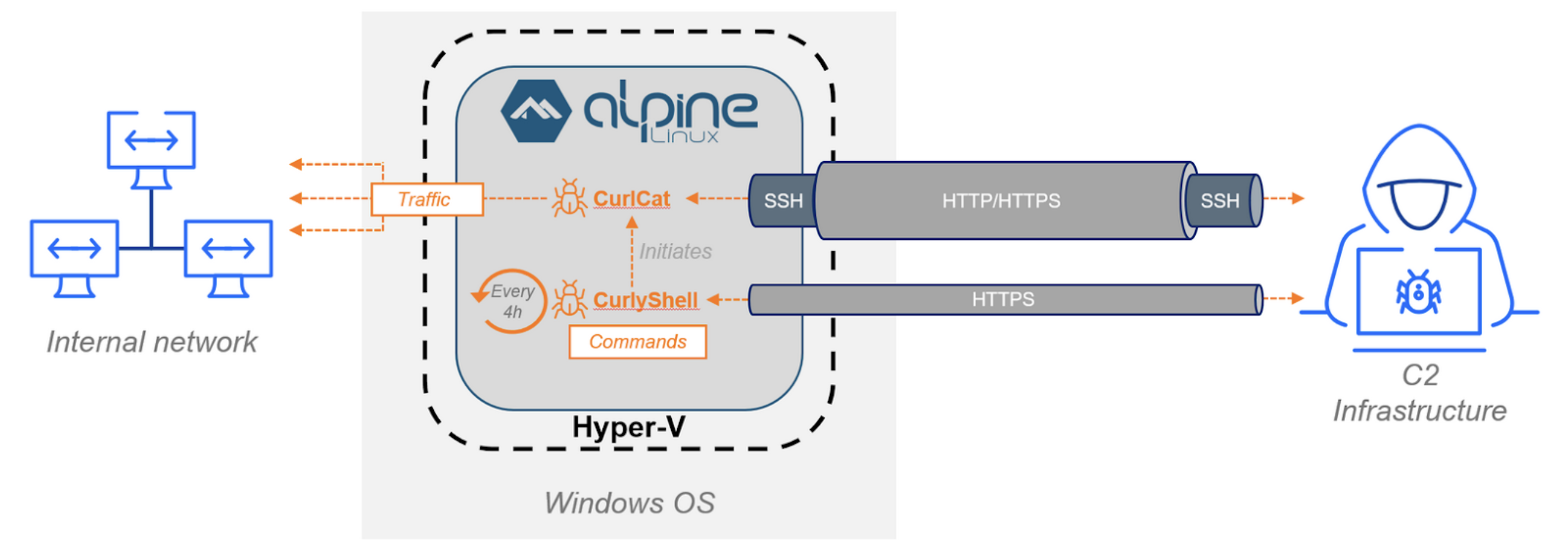

Google’s AI Threat Tracker describes a shift from AI as a mere “copilot” to AI used at runtime inside malware. Two firsts stand out:

- “Just‑in‑time” AI for self‑modifying code. PROMPTFLUX calls Gemini to regenerate its own VBScript to try to evade static signatures—an idea still in R&D. GTIG explicitly notes it cannot currently compromise victim systems, and Google says it disabled related assets. Google Cloud

- LLM‑driven commands in the wild. PROMPTSTEAL uses Hugging Face to query the Qwen2.5‑Coder‑32B‑Instruct model for one‑line Windows commands that it then runs for reconnaissance and data theft—the first time GTIG says it has seen malware query an LLM during live operations. Google Cloud
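Both behaviors depend on the malware reaching a hosted model API, and that leaves a network trail defenders can watch. The following is a minimal sketch, assuming proxy logs have already been parsed into simple (host, destination domain) records. The domain list is an illustrative assumption rather than a vetted blocklist: `generativelanguage.googleapis.com` is the public Gemini API host and `huggingface.co` covers the Hugging Face hub and its subdomains. `ALLOWED_HOSTS` and the hostnames are hypothetical.

```python
from dataclasses import dataclass

# Illustrative LLM API domains (assumption; extend for your environment).
LLM_API_DOMAINS = (
    "generativelanguage.googleapis.com",  # Gemini API
    "huggingface.co",                     # Hugging Face hub and inference subdomains
)

# Hypothetical allowlist of hosts that are expected to call LLM APIs.
ALLOWED_HOSTS = {"build-server-01"}


@dataclass
class ProxyRecord:
    host: str    # internal hostname that made the request
    domain: str  # destination domain taken from the proxy log


def is_llm_domain(domain: str) -> bool:
    """True if domain equals, or is a subdomain of, a listed LLM API domain."""
    domain = domain.lower().rstrip(".")
    return any(domain == d or domain.endswith("." + d) for d in LLM_API_DOMAINS)


def flag_unexpected_llm_traffic(records):
    """Return records where a non-allowlisted host contacted an LLM API domain."""
    return [r for r in records
            if is_llm_domain(r.domain) and r.host not in ALLOWED_HOSTS]


if __name__ == "__main__":
    logs = [
        ProxyRecord("laptop-42", "router.huggingface.co"),
        ProxyRecord("build-server-01", "generativelanguage.googleapis.com"),
        ProxyRecord("laptop-42", "example.com"),
        ProxyRecord("laptop-7", "nothuggingface.co"),  # look-alike, not a subdomain
    ]
    for r in flag_unexpected_llm_traffic(logs):
        print(f"ALERT: {r.host} -> {r.domain}")
```

Matching on exact domain or a dot-prefixed suffix avoids flagging look-alike domains such as `nothuggingface.co`, while still catching any subdomain of a listed API host.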

Google adds that adversaries are learning to “social‑engineer” AI guardrails (e.g., claiming to be CTF students) and that a maturing underground market now sells purpose‑built AI tools for phishing, malware, and vulnerability research. Google Cloud

GTIG: “Attackers are moving beyond ‘vibe coding’ and the baseline observed in 2024 of using AI tools for technical support.” Google Cloud

A number of reputable outlets summarized or expanded on these findings, including Axios, Cybersecurity Dive, The Record, and Help Net Security. Help Net Security

Meet the five AI‑linked malware families (what they do, and how “AI” is used)

- FRUITSHELL (reverse shell; observed in operations). A publicly available PowerShell reverse shell that hard‑codes prompts intended to bypass LLM‑powered security analysis. It is not a code‑generating LLM sample, but it is AI‑aware. Google Cloud

- PROMPTFLUX (dropper; experimental). A VBScript dropper that calls Gemini to rewrite and obfuscate itself, hourly in one variant, to try to evade antivirus signatures. GTIG notes it cannot currently compromise a device. Google Cloud

- PROMPTLOCK (ransomware; experimental proof of concept). A research prototype that uses an LLM to generate Lua scripts at runtime. Initially publicized as “AI‑powered ransomware,” it was later confirmed by NYU Tandon to be a lab‑only PoC, not an active criminal tool. Help Net Security

- PROMPTSTEAL (data miner; observed in operations). Queries an LLM via Hugging Face to generate single‑line Windows commands that gather system information and copy documents for exfiltration; observed in use by APT28 in Ukraine. Google Cloud

- QUIETVAULT (credential stealer; observed in operations). Targets GitHub and NPM tokens, then uses AI prompts and locally installed AI CLIs to search for additional secrets before exfiltrating them to a GitHub repository. Google Cloud

Why the real‑world risk is limited—for now

Three threads temper the hype:

- Immaturity and errors. GTIG repeatedly calls out unfinished features, commented‑out code, and asset takedowns, noting PROMPTFLUX “does not demonstrate an ability to compromise” devices at present. Google Cloud

- Operational face‑plants. Google documents cases where actors leaked keys and C2 domains to Gemini while asking for help: own goals that aided Google’s disruption. Google Cloud

- External analyses are skeptical. Ars Technica’s review concludes these five AI‑built samples were “far below par” and often easily detected, indicating vibe‑coded malware still lags professional tooling. Ground News

That measured view matches GTIG’s public stance. As Billy Leonard told Axios: “This isn’t ‘the sky is falling, end of the world.’” Axios

But here’s what is new—and why defenders should still care

- Runtime adaptivity. Even as prototypes, self‑modifying or prompt‑driven code challenges static detections and may shorten attacker iteration cycles. The Record from Recorded Future

- Lower barriers. Research indicates AI makes complex tasks easier for non‑experts, potentially seeding more experiments—even if today’s outputs are clumsy. A recent Veracode study also found ~45% of AI‑generated code contains security flaws, underscoring the uneven quality of “vibe coding.” TechRadar

- Underground markets are productizing AI. GTIG notes criminal AI toolkits that help with phishing, malware, and vuln research—an ecosystem trend worth monitoring. Google Cloud
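The runtime‑adaptivity point can be made concrete. A file‑hash signature matches exact bytes, so any regeneration, even a renamed variable, yields a new hash, while a rule keyed on behavior survives the rewrite. This is a minimal sketch under stated assumptions: the two harmless VBScript strings stand in for a script before and after an LLM‑driven rewrite, and the `FileEvent` record (process, action, path) is a hypothetical event schema, not any EDR product's format. The rule flags Windows script hosts writing into a Startup folder, the persistence behavior the takeaways associate with PROMPTFLUX; it is an illustration, not vendor detection logic.

```python
import hashlib
from dataclasses import dataclass

# Two harmless, functionally equivalent VBScript snippets (stand-ins for a
# script before and after an LLM-driven rewrite).
original = 'Dim msg\nmsg = "hello"\nWScript.Echo msg\n'
rewritten = 'Dim greeting\ngreeting = "hello"\nWScript.Echo greeting\n'


def sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


# Static signature: a blocklist containing the hash of the known sample.
hash_blocklist = {sha256(original)}
print("original caught by hash:", sha256(original) in hash_blocklist)   # True
print("rewrite caught by hash: ", sha256(rewritten) in hash_blocklist)  # False


@dataclass
class FileEvent:
    process: str  # image name of the process performing the action
    action: str   # e.g. "file_write" (hypothetical event schema)
    path: str     # target file path

SCRIPT_HOSTS = {"wscript.exe", "cscript.exe"}


def is_startup_persistence(ev: FileEvent) -> bool:
    """Flag Windows script hosts writing files into a Startup folder."""
    path = ev.path.lower().replace("/", "\\")
    return (ev.process.lower() in SCRIPT_HOSTS
            and ev.action == "file_write"
            and "\\start menu\\programs\\startup\\" in path)


# The behavioral rule fires regardless of the dropped file's name or content.
events = [
    FileEvent("wscript.exe", "file_write",
              r"C:\Users\a\AppData\Roaming\Microsoft\Windows\Start Menu\Programs\Startup\a1.vbs"),
    FileEvent("wscript.exe", "file_write",
              r"C:\Users\a\AppData\Roaming\Microsoft\Windows\Start Menu\Programs\Startup\zz9.vbs"),
    FileEvent("notepad.exe", "file_write", r"C:\Users\a\Documents\notes.txt"),
]
print([is_startup_persistence(e) for e in events])  # [True, True, False]
```

The trade‑off is precision: a behavioral rule like this one will also match legitimate admin scripts, so in practice it needs an allowlist or a second signal (for example, the LLM API traffic discussed earlier) before alerting.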

Context: the PROMPTLOCK confusion (and why it matters)

In August, ESET announced discovery of PromptLock as AI‑powered ransomware. Days later, NYU Tandon clarified it was their controlled research prototype (“Ransomware 3.0”) uploaded to VirusTotal during testing—not an in‑the‑wild threat. As lead author Md Raz put it: “The cybersecurity community’s immediate concern…shows how seriously we must take AI‑enabled threats.” ESET

That episode captures 2025’s reality: AI malware proofs of concept look flashy, but operational tradecraft still wins.

Practical takeaways for security teams

- Harden egress and watch the “AI edge.” Monitor and control traffic to AI APIs (Hugging Face, model endpoints), track use of locally installed AI CLIs, and alert on unusual LLM query patterns from end‑user hosts. (PROMPTSTEAL/QUIETVAULT context.) Google Cloud

- Treat prompts as data. Scan for embedded prompts and prompt‑generated scripts on endpoints; don’t assume code with comments like “CTF” is benign. (GTIG notes guardrail‑bypass pretexts.) Google Cloud

- Prefer behavior over signatures. Self‑regenerating malware demands behavioral detection (process creation chains, LOLBins, persistence writes to Startup, anomalous exfiltration) over static hashes. (PROMPTFLUX patterns.) Google Cloud

- Exploit attacker AI mistakes. Hunt for hard‑coded API keys, LLM prompts, and logs (GTIG mentions %TEMP%\thinking_robot_log.txt) that can reveal TTPs and infrastructure. Google Cloud

- Keep perspective. As Google cautions, this is early. Level‑headed prioritization beats panic. Axios
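Two of these takeaways, treating prompts as data and hunting for attacker mistakes, can start as simple string scans over scripts or over strings extracted from binaries. The sketch below is a minimal illustration, not a production detector. The token prefixes (`AIza` for Google API keys, `hf_` for Hugging Face, `ghp_` for GitHub classic personal access tokens, `npm_` for npm tokens) are widely used formats, but the length bounds here are approximations. The phrases in `PROMPT_PATTERNS` are hypothetical heuristics that would need tuning against real samples. Only `thinking_robot_log.txt` comes from GTIG's reporting; everything else is an assumption.

```python
import re

# Common token prefixes; length bounds are approximations (assumption).
SECRET_PATTERNS = {
    "google_api_key": re.compile(r"AIza[0-9A-Za-z_\-]{35}"),
    "huggingface_token": re.compile(r"hf_[A-Za-z0-9]{30,}"),
    "github_pat": re.compile(r"ghp_[A-Za-z0-9]{36}"),
    "npm_token": re.compile(r"npm_[A-Za-z0-9]{36}"),
}

# Hypothetical phrases typical of LLM prompts embedded in scripts.
PROMPT_PATTERNS = [
    re.compile(r"(?i)\byou are an? (expert|assistant|security)"),
    re.compile(r"(?i)\bignore (all )?previous instructions\b"),
    re.compile(r"(?i)\boutput only\b"),
]

# Log artifact name reported by GTIG for PROMPTFLUX.
LOG_ARTIFACTS = ["thinking_robot_log.txt"]


def scan_text(text: str) -> list[tuple[str, str]]:
    """Return (finding_type, matched_text) pairs found in a script or strings dump."""
    findings = []
    for name, pattern in SECRET_PATTERNS.items():
        findings += [(name, m.group(0)) for m in pattern.finditer(text)]
    for pattern in PROMPT_PATTERNS:
        findings += [("embedded_prompt", m.group(0)) for m in pattern.finditer(text)]
    lowered = text.lower()
    findings += [("log_artifact", a) for a in LOG_ARTIFACTS if a in lowered]
    return findings


# Synthetic sample: the key below is fabricated and not a real credential.
fake_key = "AIza" + "A" * 35
sample = "\n".join([
    f'key = "{fake_key}"',
    'prompt = "You are an expert coding assistant. Output only the code."',
    'logPath = Environ("TEMP") & "\\thinking_robot_log.txt"',
])

for kind, match in scan_text(sample):
    print(kind, match)
```

Each hit still needs triage: a matched key may belong to attacker infrastructure, which is exactly the kind of leak GTIG describes using to disrupt operations.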

The bottom line

Google’s tracker does confirm something new: malware families experimenting with LLMs at runtime. But the current wave looks immature, error‑prone, and often self‑defeating, more a signal of where offense wants to go than a crisis already here. Or, as GTIG frames it, attackers are only now moving beyond “vibe coding.” Google Cloud

Further reading / sources

- Google: “Threat actors misuse AI to enhance operations” (GTIG overview). blog.google

- Google Cloud: GTIG AI Threat Tracker (full report and case studies). Google Cloud

- Axios: AI‑powered malware is here (interview with Google’s Billy Leonard). Axios

- Cybersecurity Dive: AI‑based malware makes attacks stealthier and more adaptive. Cybersecurity Dive

- The Record: New malware uses AI to adapt during attacks. The Record from Recorded Future

- Help Net Security: Google uncovers malware using LLMs to operate and evade detection. Help Net Security

- Ground News (summary of Ars Technica’s analysis that the samples were “far below par”). Ground News

- NYU Tandon: Ransomware 3.0 (PromptLock research clarification and quotes). NYU Tandon School of Engineering

Correction note (context): PROMPTLOCK is not an active criminal tool; it’s a research PoC later included by Google as an experimental example of AI‑assisted malware techniques. NYU Tandon School of Engineering